The key to mastering Generative AI on AWS is understanding how AI systems behave in production, not just calling APIs or copying sample code. Here is your complete guide to how developers actually build, operate, and scale Generative AI applications on AWS in 2026, and how this aligns with the AIP-C01 certification and the AWS Certified Generative AI Developer – Professional practice exam.

In this blog, you will learn how AWS AI architecture is changing, how organizations are using AI differently now, which certification validates these skills, who it is for, and how to prepare with an official learning path and hands-on resources. Let’s dive in!

Why is there a demand for Generative AI on AWS in 2026?

By 2026, generative AI is becoming a standard application layer in modern cloud systems. Companies are adopting AI-first architectures to raise productivity, reduce costs, automate decisions, and improve the customer experience.

AWS has emerged as a preferred platform for Generative AI because it provides:

- Managed foundation models via Amazon Bedrock

- Secure data integration with S3, OpenSearch, and databases

- Scalable inference using serverless architectures

- Built-in governance, logging, and monitoring

- Cost control and pay-as-you-go pricing

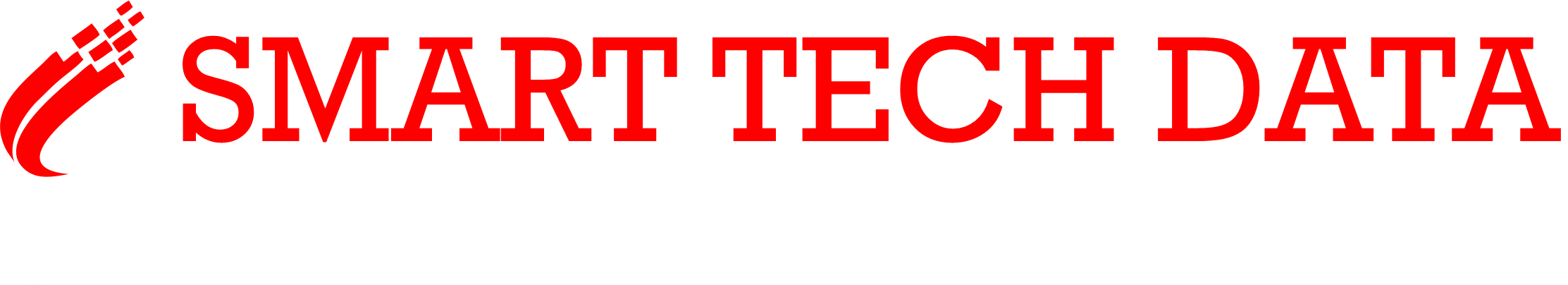

Traditional software relied on hard-coded logic. Modern AI systems rely on models, data, orchestration, and governance. Organisations now expect developers to:

- Integrate LLMs into business applications

- Manage embeddings and vector databases

- Implement Retrieval-Augmented Generation (RAG)

- Handle hallucination risks

- Monitor AI quality, cost, and security

Manual AI pipelines struggle to scale; AWS addresses this with Amazon Bedrock, SageMaker, and serverless services. This is why many enterprises standardise AI workflows across Cloud, DevOps, and Platform Engineering teams.

What are the industry signals?

The industry signal is clear and consistent:

- Generative AI skills are now core requirements for Cloud Engineers, AI Developers, and Platform Engineers.

- There is strong hiring demand across the US, India, and the EU for AWS AI professionals.

- Enterprises widely use AWS for AI in cloud migration, analytics, automation, and customer support.

- The AIP-C01 certification has shifted from “nice-to-have” to a baseline credibility signal.

- The AWS Certified Generative AI Developer – Professional credential is becoming a key differentiator for senior roles.

For learners, AIP-C01 is one of the fastest and most practical certifications for validating real AI-on-cloud skills. It maps directly to production workflows such as RAG, embeddings, model selection, cost optimization, and governance.

If you are preparing for cloud or AI roles in 2026, the ability to build Generative AI on AWS is now foundational.

Overview

Generative AI skills are becoming as fundamental as cloud networking was a decade ago. AWS’s approach, centered on Amazon Bedrock + SageMaker + serverless architecture, reflects how AI is built in real enterprises today.

This ecosystem supports safe model usage, secure data handling, scalable deployment, real-time monitoring, and team collaboration.

What is the AIP-C01 Certification?

The AIP-C01 certification validates your ability to understand, design, and work with AI services on AWS. It is designed for professionals who want to:

- Select appropriate foundation models

- Design AI architectures

- Apply responsible AI principles

- Integrate AI into business workflows

This is not a purely theoretical exam; it tests real-world decision-making.

What does the AIP-C01 Certification validate?

The exam validates your ability to:

- Explain the fundamentals of Generative AI on AWS

- Choose the right foundation model for a use case

- Understand RAG and embeddings

- Design secure AI architectures

- Estimate cost-performance trade-offs

- Apply responsible AI and governance

Exam Format at a Glance

| Attribute | Details |
| --- | --- |
| Duration | 120 minutes |
| Format | Online proctored |
| Question types | Multiple choice & multi-select |
| Validity | 3 years |
| Level | Professional |

Key takeaway

This examination assesses your understanding of AI design, risk, cost, security, and scalability in actual workflows; it is not a test of memory.

What Has Changed Between Generative and Traditional AI on AWS?

Prediction was the main focus of traditional AI. Modern generative AI focuses on creation + reasoning + retrieval + orchestration.

Why this major shift?

- Moves beyond basic ML into LLM-powered systems

- Emphasizes data quality and retrieval

- Focuses on security, governance, and cost control

- Mirrors real enterprise AI pipelines

Foundation Models Available in Amazon Bedrock

Amazon Bedrock is the primary entry point for most AI applications on AWS today. It provides secure, managed access to foundation models, including:

1. Amazon Titan

Amazon’s own family of foundation models in Bedrock, designed for secure enterprise use cases like text generation, embeddings, and search with strong privacy controls.

2. Anthropic Claude

A high-capability conversational LLM focused on safety, reasoning, and long-context analysis, widely used for chatbots, document analysis, and enterprise assistants.

3. Meta Llama

An open-weight, highly flexible large language model family from Meta, popular for customization, research, and scalable application development.

4. Stability AI

A creative AI model provider best known for high-quality image generation and multimodal (text + image) capabilities.

With Bedrock, developers can:

- Generate text, images, and embeddings

- Fine-tune models on proprietary data

- Implement RAG workflows

- Build chatbots, copilots, and AI assistants
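To make the first capability concrete, here is a minimal Python sketch of a text-generation call through the Bedrock Converse API. It assumes a boto3 `bedrock-runtime` client and uses an example Anthropic Claude model ID that you should swap for a model enabled in your account and Region; the `generate_text` helper name is hypothetical. The client is passed in as a parameter so the function can be exercised without AWS credentials.

```python
from typing import Any, Optional

# Example model ID (an assumption for illustration); use any text model
# that is enabled in your account and Region.
DEFAULT_MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"


def generate_text(
    client: Any,
    prompt: str,
    model_id: str = DEFAULT_MODEL_ID,
    system_prompt: Optional[str] = None,
    max_tokens: int = 512,
    temperature: float = 0.2,
) -> str:
    """Send a single-turn prompt to a Bedrock model via the Converse API.

    `client` is expected to be a boto3 "bedrock-runtime" client; it is passed
    in rather than created here so the function can be tested without AWS.
    """
    request: dict = {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }
    if system_prompt:
        # System instructions are sent separately from the user turn.
        request["system"] = [{"text": system_prompt}]

    response = client.converse(**request)
    # Converse nests the generated text under output.message.content.
    return response["output"]["message"]["content"][0]["text"]
```

In practice you would create the client with `boto3.client("bedrock-runtime", region_name="us-east-1")` and call `generate_text(client, "Summarize our refund policy in two sentences.")`. Because Converse uses one request shape across supported models, switching models is usually just a change of `model_id`.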

Why do developers prefer Bedrock?

Developers prefer Bedrock for the following reasons:

- No infrastructure management

Developers can access and use foundation models without provisioning GPUs, configuring scaling, or maintaining model servers. This lets teams focus on building applications instead of managing ML infrastructure.

- Built-in security

Bedrock integrates with AWS IAM, encryption, and private networking. Data stays within the AWS environment and is not used to retrain base models, which helps meet enterprise security expectations.

- Enterprise-grade governance

Guardrails, access control, and monitoring features allow organizations to manage model usage, apply safety policies, and track activity across teams and applications.

- Pay-as-you-go pricing

You pay only for model inference and usage rather than maintaining expensive hardware, making it cost-efficient for both experimentation and production workloads.

This makes Bedrock the backbone of LLM application development on AWS.
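The built-in security point above usually starts with IAM. As a sketch, assuming you want a role that may only call specific foundation models, a least-privilege policy can be generated like this; the helper name and the model ID in the example are illustrative. Converse calls are authorized through the `bedrock:InvokeModel` action, and streaming calls through `bedrock:InvokeModelWithResponseStream`.

```python
import json


def bedrock_invoke_policy(region: str, model_ids: list[str]) -> dict:
    """Build an IAM policy document that only allows invoking the listed models."""
    # Foundation model ARNs have no account ID segment.
    resources = [
        f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
        for model_id in model_ids
    ]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedModelsOnly",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": resources,
            }
        ],
    }


if __name__ == "__main__":
    # Print a policy you could attach to a Lambda execution role.
    policy = bedrock_invoke_policy("us-east-1", ["amazon.titan-embed-text-v2:0"])
    print(json.dumps(policy, indent=2))
```

Scoping `Resource` to explicit model ARNs, rather than `*`, means a compromised or misconfigured function cannot silently switch to a different (possibly more expensive) model.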

LLM Application Development on AWS

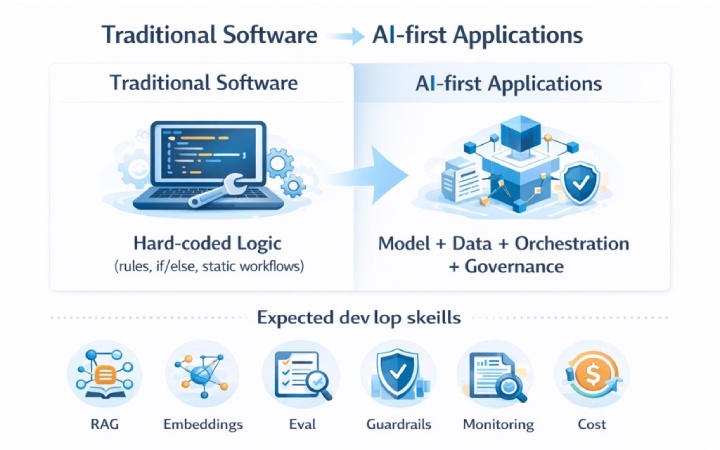

When building LLM applications on AWS, developers typically use:

- Amazon Bedrock → Foundation models

- AWS Lambda → Serverless logic

- API Gateway → API exposure

- Amazon OpenSearch Service or another vector database → Semantic search

- S3 or RDS → Data storage

This pattern enables scalable, secure, and cost-efficient AI applications.
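Here is a minimal sketch of the Lambda piece of this stack, assuming an API Gateway proxy integration that sends a JSON body like `{"question": "..."}`. The model ID, the `question` field name, and the handler layout are assumptions for illustration. The optional `client` parameter exists only so the handler can be tested without AWS; Lambda itself calls `lambda_handler(event, context)`.

```python
import json
from typing import Any, Optional

MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"  # example ID (assumption)

_client: Any = None


def _get_client() -> Any:
    """Create the Bedrock runtime client once per Lambda execution environment."""
    global _client
    if _client is None:
        import boto3  # included in the AWS Lambda Python runtime

        _client = boto3.client("bedrock-runtime")
    return _client


def _response(status: int, payload: dict) -> dict:
    # API Gateway proxy integrations expect statusCode/headers/body, body as a string.
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def lambda_handler(event: dict, context: Any, client: Optional[Any] = None) -> dict:
    """Validate the request, ask Bedrock, and return an API Gateway response."""
    try:
        body = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "Request body must be valid JSON"})

    question = body.get("question") if isinstance(body, dict) else None
    if not isinstance(question, str) or not question.strip():
        return _response(400, {"error": "Missing 'question'"})

    bedrock = client or _get_client()
    result = bedrock.converse(
        modelId=MODEL_ID,
        messages=[{"role": "user", "content": [{"text": question.strip()}]}],
        inferenceConfig={"maxTokens": 512},
    )
    answer = result["output"]["message"]["content"][0]["text"]
    return _response(200, {"answer": answer})
```

Caching the client in a module-level variable lets warm Lambda invocations reuse it instead of recreating it on every request.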

How Are Generative AI Systems Designed on AWS?

A typical Generative AI architecture on AWS follows this flow:

- User sends a request via web/mobile app

- API Gateway triggers AWS Lambda

- Lambda converts the request into an embedding and retrieves relevant context from a vector database

- Bedrock generates a response grounded in the retrieved context

- The response is returned to the user
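The request flow above can be sketched as a small orchestration function. This is a minimal, provider-agnostic sketch: `embed`, `search`, and `generate` are hypothetical callables you would back with a Bedrock embedding model, a vector database query, and a Bedrock text model, and the prompt wording is only one reasonable choice.

```python
from typing import Callable, Sequence

Vector = Sequence[float]


def build_grounded_prompt(question: str, passages: Sequence[str]) -> str:
    """Combine retrieved passages and the user question into one prompt."""
    context = "\n\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer_question(
    question: str,
    embed: Callable[[str], Vector],
    search: Callable[[Vector, int], list[str]],
    generate: Callable[[str], str],
    top_k: int = 3,
) -> str:
    """Run the flow: embed the query, retrieve context, augment the prompt, generate."""
    query_vector = embed(question)          # e.g. a Bedrock embedding model
    passages = search(query_vector, top_k)  # e.g. a vector database query
    prompt = build_grounded_prompt(question, passages)
    return generate(prompt)                 # e.g. a Bedrock text model
```

Keeping each step behind a plain function makes it straightforward to swap the vector store or model, and to test the flow with stubs before wiring in AWS services.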

This architecture is used for:

- AI chatbots

- Enterprise search assistants

- Automated content generators

- Smart document processing systems

AWS Generative AI Use Cases in 2026

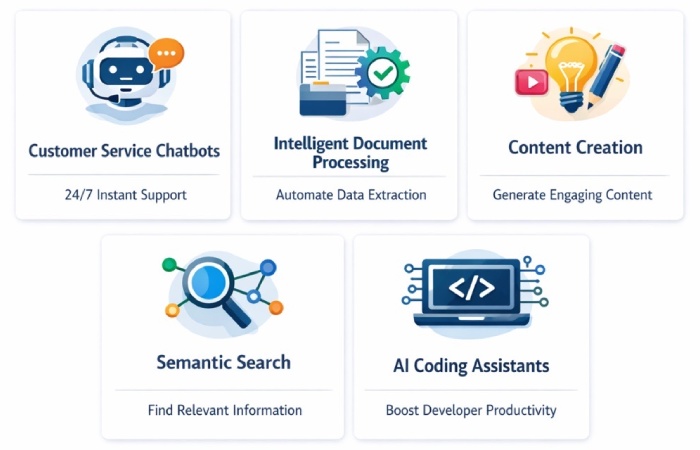

Here are real-world AWS generative AI use cases that developers are implementing today:

1) AI chatbots for customer service

Modern customer service is evolving away from rule-based bots and toward conversational AI solutions built on AWS. With Amazon Bedrock, Lambda, and vector databases, businesses are making chatbots that can interpret natural language, get useful information from internal knowledge bases, and give correct, context-aware answers right away.

These AI assistants are different from regular chatbots since they can manage complicated questions, follow discussion threads, and even smartly escalate problems when they need to. This lowers the number of support tickets, makes customers happier, and lets human agents work on more important issues.
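Following a discussion thread mostly comes down to sending earlier turns back to the model with every request. A minimal sketch, assuming the Bedrock Converse message format (alternating `user` and `assistant` messages): the `ConversationMemory` class is hypothetical and keeps only the most recent turns so prompts, and token costs, stay bounded.

```python
from typing import Any


class ConversationMemory:
    """Rolling window of chat turns in the Converse API message format."""

    def __init__(self, max_turns: int = 5) -> None:
        # One turn = one user message plus one assistant reply (example size).
        self.max_turns = max_turns
        self.messages: list[dict[str, Any]] = []

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": [{"text": text}]})

    def add_assistant(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": [{"text": text}]})
        self._trim()

    def _trim(self) -> None:
        # Drop whole turns from the front so history still starts with a user message.
        max_messages = self.max_turns * 2
        while len(self.messages) > max_messages:
            del self.messages[:2]
```

Each request would pass `memory.messages` as the `messages` argument to `converse`, then record the model's reply with `add_assistant`. Dropping whole turns keeps the history starting with a user message, which Converse-compatible models generally expect.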

2) Intelligent Document Processing

Businesses produce large volumes of documents, such as contracts, invoices, legal agreements, research reports, and compliance files. Using Retrieval-Augmented Generation (RAG) on AWS, developers are building systems that automatically analyze and summarize these documents.

When companies use Amazon Bedrock with services like S3 and OpenSearch, they can extract crucial information, flag risks, highlight important clauses, and produce short summaries. This is especially useful in the legal, financial, and healthcare fields, where reviewing documents by hand takes a lot of time and money.
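Before documents can be retrieved for RAG, they are usually split into chunks that are embedded individually. Here is a minimal chunking sketch, assuming character-based windows with a fixed overlap; the sizes are arbitrary example values, and real pipelines often split on tokens, sentences, or document structure instead.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into overlapping character windows for embedding.

    The overlap keeps a sentence that straddles a boundary retrievable from
    either neighbouring chunk.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start : start + chunk_size]
        if chunk.strip():  # skip whitespace-only windows
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

Each chunk would then be embedded and stored alongside metadata such as the source S3 key, so answers can cite where the information came from.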

3) Content Creation using AI

AWS generative AI is helping marketing, product, and content teams automate and scale content generation. Instead of writing every blog post, social media post, or product description by hand, teams can use Bedrock-powered apps to produce high-quality drafts based on prompts and brand guidelines.

Developers typically integrate these tools into internal workflows so that marketers can refine AI-generated drafts instead of starting from scratch. This speeds up campaign creation while keeping content consistent and creative.
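Brand guidelines are easiest to enforce when every request uses the same prompt template. A minimal sketch using Python's standard `string.Template`; the template wording and placeholder names are hypothetical, and the resulting prompt would be sent to a Bedrock text model.

```python
from string import Template

# Hypothetical template; placeholders are illustrative assumptions.
DRAFT_TEMPLATE = Template(
    "You are a copywriter for $brand.\n"
    "Brand guidelines:\n$guidelines\n\n"
    "Write a $content_type about: $topic\n"
    "Keep it under $max_words words."
)


def build_draft_prompt(
    brand: str,
    guidelines: list[str],
    content_type: str,
    topic: str,
    max_words: int = 150,
) -> str:
    """Fill the template so every draft request carries the same brand rules."""
    bullet_list = "\n".join(f"- {rule}" for rule in guidelines)
    # substitute() raises KeyError if any placeholder is left unfilled.
    return DRAFT_TEMPLATE.substitute(
        brand=brand,
        guidelines=bullet_list,
        content_type=content_type,
        topic=topic,
        max_words=max_words,
    )
```

Storing the template in version control, rather than in each marketer's head, makes changes to brand rules reviewable and applies them to every draft at once.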

4) Semantic Search Systems

When people don’t know the exact words they need, traditional keyword search doesn’t always work. AWS generative AI lets systems do semantic search, which means they can grasp what a query means instead of just matching words.

Companies can make smart search engines that find the most relevant documents, emails, or knowledge articles, even if the words are different, by putting embeddings in vector databases and combining them with Bedrock models. This is a common feature in HR systems, enterprise knowledge management, and research platforms.
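Under the hood, semantic search ranks stored embeddings by their similarity to the query embedding. This brute-force sketch uses cosine similarity over an in-memory index to illustrate the idea only; vector databases such as OpenSearch use approximate nearest-neighbour indexes to do this at scale. In practice the vectors would come from an embedding model.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_k(
    query: Sequence[float],
    index: dict[str, Sequence[float]],
    k: int = 3,
) -> list[tuple[str, float]]:
    """Brute-force nearest-neighbour search over a {doc_id: vector} index."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

Because cosine similarity compares direction rather than exact words, a query about "time off" can still rank a document about "annual leave" highly, provided the embedding model places them close together.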

5) AI Coding Assistants

More developers are using AWS-powered AI tools to improve code quality and productivity. AI coding assistants can suggest code snippets, find and fix bugs, explain complicated logic, and improve efficiency.

When used with AWS development environments, these assistants help engineers produce cleaner code more quickly, reduce defects, and shorten development cycles. This is especially helpful for large teams building cloud-native apps.

AWS Generative AI Developer Skills You Need

To be successful in developing generative AI on AWS, developers should be experts in:

- Python remains the primary language for building AI apps on AWS. Most LLM integrations, data pipelines, and automation workflows are written in Python, so you need to be comfortable using it with Amazon Bedrock, SageMaker, and the AWS SDK for Python (boto3).

- Prompt engineering is a critical skill because the wording of queries and instructions strongly affects the quality of AI responses. To make AI systems dependable in real business situations, developers need to know how to structure prompts, provide context, and iteratively refine outputs.

- You need to understand vector embeddings to enable semantic search and intelligent retrieval. Embeddings represent text as numerical vectors, so AI systems can measure how closely chunks of text are related. This is essential for building effective search and recommendation systems.

- RAG (Retrieval-Augmented Generation) architecture is now a must-have skill. RAG grounds AI responses in real organizational data by combining retrieved knowledge with the LLM’s generation capabilities, which reduces incorrect answers and improves accuracy.

- A solid understanding of AWS IAM security is required to keep AI apps safe and compliant. Developers need to know how to configure roles, permissions, and access policies so that only approved users and services can interact with AI models and sensitive data.

- API development experience is important because most generative AI systems are built as services that communicate through APIs. Using AWS Lambda and API Gateway, developers need to build APIs that are scalable, reliable, and well-structured.

- Model evaluation skills let engineers assess the quality of AI responses over time, measure performance, and detect bias. This includes reviewing outputs, tracking accuracy, and continuously improving the system.

- Finally, cost optimization is an important skill for AWS Generative AI development. At scale, LLM calls, embeddings, and storage can get expensive, so developers need to design architectures that are both performant and cost-efficient.

These are among the most important skills tested on the AWS Certified Generative AI Developer – Professional exam, so you need them both for real-world work and for passing the exam.
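Cost optimization usually starts with simple arithmetic on token volumes. Here is a minimal estimator, assuming on-demand per-token pricing quoted per 1,000 tokens; the prices in the example are placeholders, not actual Bedrock rates, so look up current pricing for your model and Region. The function name is hypothetical.

```python
def estimate_monthly_cost(
    requests_per_day: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    price_per_1k_input: float,
    price_per_1k_output: float,
    days: int = 30,
) -> float:
    """Rough inference cost for on-demand, per-token pricing, in the price's currency."""
    input_cost = input_tokens_per_request / 1000 * price_per_1k_input
    output_cost = output_tokens_per_request / 1000 * price_per_1k_output
    per_request = input_cost + output_cost
    return round(per_request * requests_per_day * days, 2)
```

For example, 10,000 requests per day with 1,500 input and 300 output tokens each, at hypothetical prices of $0.003 and $0.015 per 1,000 tokens, comes to about $2,700 over 30 days; trimming retrieved context down to 750 input tokens lowers that to about $2,025. Estimates like this show quickly whether prompt size, output length, or model choice dominates the bill.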

AWS AI Services for Developers: Core Stack

| AWS Service | Purpose |
| --- | --- |
| Amazon Bedrock | Foundation models |
| Amazon SageMaker | Train & deploy models |
| AWS Lambda | Serverless AI logic |
| API Gateway | Expose AI APIs |
| OpenSearch | Vector search |
| Amazon S3 | Data storage |
| CloudWatch | Monitoring |

Mastering this stack is essential for developers working with AWS AI services.

What to Expect After Certification?

Certified professionals can:

- Design AI architectures

- Implement RAG pipelines

- Secure AI workloads

- Optimize model costs

- Deploy scalable AI apps

This proves operational readiness, not just theory.

Study materials for the AIP-C01 exam

Free Resources

Here are some free resources you can use:

- The official AWS documentation

- Blogs about Amazon Bedrock

- Tutorials on YouTube

- Projects in the AWS free tier

Paid Resources

For more thorough practice, you can also use paid resources for the AIP-C01 exam:

Whizlabs practice exams

These provide exam-style scenario questions that show how AWS tests architecture decisions, not just definitions. Detailed explanations help you identify weak areas and improve your reasoning.

Guided AWS labs

Step-by-step labs let you build real solutions using services like Bedrock, Lambda, and storage integrations. This helps you connect theory with real implementation.

Structured AI courses

Organized learning paths cover concepts in the right order, from fundamentals to architecture patterns, so you avoid random preparation and confusion.

Hands-on practice matters more than reading

The exam evaluates practical decision making. Building and testing solutions improves retention and confidence far more than only studying notes.

How to Prepare for AIP-C01 in 2026?

The most effective way to prepare for the AIP-C01 exam is to gain experience with real AWS use cases, build AI systems, and understand how generative AI behaves in production. Below are ways to get hands-on with the topic.

Hands-on AWS labs

Spend time actually building on AWS instead of only reading theory. Work with real services like Lambda, S3, API Gateway, and Bedrock.

Bedrock experimentation

Actively test different foundation models available in Amazon Bedrock. Compare outputs, costs, and performance for real business scenarios.

Building a chatbot

Create a simple AI assistant that can answer user questions. Integrate Bedrock with a web or API-based interface to mimic production apps.

Implementing RAG

Generate embeddings for your documents, store them in a vector store, and retrieve relevant context dynamically. Use that context with Bedrock to generate accurate, grounded responses.

Understanding embeddings

Learn how text is converted into numerical vectors for semantic search. Practice using embeddings to improve search relevance and AI accuracy.
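Here is a minimal sketch of turning text into a vector with a Bedrock embedding model through `InvokeModel`. It assumes a boto3 `bedrock-runtime` client and the Amazon Titan Text Embeddings V2 request format (`inputText` in, `embedding` out); other embedding models use different request bodies, so check the model's documentation. The `embed_text` helper name is hypothetical.

```python
import json
from typing import Any

# Example embedding model ID (assumption); confirm availability in your Region.
EMBED_MODEL_ID = "amazon.titan-embed-text-v2:0"


def embed_text(client: Any, text: str, model_id: str = EMBED_MODEL_ID) -> list[float]:
    """Convert text to a vector with a Bedrock embedding model.

    `client` is expected to be a boto3 "bedrock-runtime" client.
    """
    response = client.invoke_model(
        modelId=model_id,
        body=json.dumps({"inputText": text}),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream; Titan embedding models return JSON
    # containing an "embedding" list of floats.
    payload = json.loads(response["body"].read())
    return payload["embedding"]
```

Embedding both your documents and each incoming query with the same model is what makes their vectors comparable; mixing models breaks similarity search.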

8-Week AIP-C01 Study Plan

When studying for the AIP-C01 exam, a well-organized study strategy matters more than sheer effort. Here is a useful 8-week plan that aligns with the most important exam topics and reflects how generative AI is actually built and run on AWS in real-world situations.

Weeks 1 to 2: Core Foundations

During the first two weeks, your focus should be on developing a solid foundational understanding of Generative AI concepts on AWS:

- Learn AWS basics

- Understand Bedrock

- Study LLM concepts

Week 3: Architecture and Application Design

In the third week, your goal is to understand how generative AI applications scale beyond simple prompts. Focus on how real AWS systems are structured rather than isolated experiments.

Concentrate on the following aspects:

- Core components of Generative AI architecture on AWS

- How Amazon Bedrock integrates with Lambda and API Gateway

- Data flow between applications, storage, and models

- Designing end-to-end AI application patterns for real use cases

Week 4: RAG, Embeddings, and Data Handling

In this phase, you must understand how data becomes the source of truth for AI systems and how to retrieve it reliably. This is a heavily weighted area for AIP-C01.

You should master:

- Purpose of embeddings and why they matter

- How vector stores enable semantic search

- Retrieval-Augmented Generation (RAG) processes

- Linking Bedrock to S3, OpenSearch, or databases

This week is especially important because the exam tests your understanding of how AI works with real business data, not just with models.
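When you link Bedrock-generated embeddings to OpenSearch, the retrieval step is typically a k-NN query. A minimal sketch, assuming an index whose mapping declares a `knn_vector` field; the field names `embedding`, `text`, and `source` and both helper names are hypothetical. The body would be passed to a search call such as `client.search(index="docs", body=...)` in the `opensearch-py` client.

```python
def knn_query(
    vector: list[float],
    k: int = 5,
    field: str = "embedding",
    source_fields: tuple[str, ...] = ("text", "source"),
) -> dict:
    """Build an OpenSearch k-NN search body against a knn_vector field."""
    return {
        "size": k,
        "_source": list(source_fields),  # return only the fields the prompt needs
        "query": {"knn": {field: {"vector": vector, "k": k}}},
    }


def extract_passages(response: dict, text_field: str = "text") -> list[str]:
    """Pull the stored text out of each search hit, best match first."""
    hits = response.get("hits", {}).get("hits", [])
    return [hit["_source"][text_field] for hit in hits]
```

The passages returned by `extract_passages` are what you would place into the prompt before calling Bedrock, completing the RAG loop.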

Week 5: Security, Cost, and Responsible AI

As you get closer to the exam, focus on concepts that ensure safe, compliant, and cost-effective AI systems.

Prioritize:

- AWS IAM roles, policies, and least privilege access

- Data privacy, encryption, and model access controls

- Monitoring AI usage with CloudWatch

- Cost optimisation strategies for LLM calls and embeddings

This is where you shift from “building AI” to “operating AI safely in production.”
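Monitoring with CloudWatch can start with recording token usage per request. This sketch assumes a boto3 `cloudwatch` client and the `usage` dict returned by the Converse API (`inputTokens`, `outputTokens`); the namespace, the `Feature` dimension, and the helper name are hypothetical. Bedrock already reports model-level metrics such as `InputTokenCount` in the `AWS/Bedrock` namespace, so custom metrics like these are mainly useful for application-specific breakdowns.

```python
from typing import Any


def publish_token_usage(
    cloudwatch: Any,
    model_id: str,
    usage: dict,
    feature: str,
    namespace: str = "GenAIApp",
) -> None:
    """Record token counts from one Converse response as CloudWatch custom metrics."""
    # Dimensions let you slice the metrics per model and per application feature.
    dimensions = [
        {"Name": "ModelId", "Value": model_id},
        {"Name": "Feature", "Value": feature},
    ]
    cloudwatch.put_metric_data(
        Namespace=namespace,
        MetricData=[
            {
                "MetricName": "InputTokens",
                "Dimensions": dimensions,
                "Value": usage["inputTokens"],
                "Unit": "Count",
            },
            {
                "MetricName": "OutputTokens",
                "Dimensions": dimensions,
                "Value": usage["outputTokens"],
                "Unit": "Count",
            },
        ],
    )
```

Calling something like `publish_token_usage(boto3.client("cloudwatch"), model_id, response["usage"], feature="support-chat")` after each request gives you per-feature dashboards, and you can set CloudWatch alarms on these metrics to catch cost spikes early.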

Week 6: AWS AI Services Integration

In this phase, you focus on:

- How Amazon Bedrock fits with other AWS AI services for developers

- Comparing Bedrock vs SageMaker use cases

- Understanding when to use managed AI services vs custom ML workflows

Week 7: Real-World Use Cases and Practice

Focus on:

- Reviewing real-world AWS Generative AI use cases

- Hands-on labs covering architecture → RAG → security patterns

- Taking practice exams and analysing weak areas

Week 8: Final Revision and Exam Readiness

During this week, you connect everything you have learned into a complete, production-ready mindset and focus on:

- Revising core concepts and architecture patterns

- Reattempting difficult practice questions

- Improving speed and decision making for scenario-based questions

With this systematic eight-week approach, combining theory, hands-on labs, and practice tests after each module, you progressively build expertise along the flow: Foundations → Architecture → RAG → Security & Cost → AWS AI Services → Real-World Practice → Exam Readiness. This closely mirrors how real businesses build and run Generative AI on AWS, and it aligns well with what the AIP-C01 exam expects from candidates.

How to Approach the AIP-C01 Exam?

Read questions carefully

Pay attention to all the keywords like latency, cost, data privacy, and model accuracy in the given questions. Small details often indicate the actual AWS service or architecture pattern.

Think about real production risks

Consider failure handling, hallucination risk, monitoring, and model safety. The correct choice usually reflects a reliable real-world AI deployment.

Prefer secure and scalable solutions

Opt for managed services, guardrails, and least-privilege access. Designs should support scaling users, data volume, and inference load.

Avoid shortcuts

Temporary fixes, manual workflows, or unmanaged infrastructure are rarely correct. AWS solutions typically emphasize automation and operational simplicity.

If it does not work in real life, it is probably not the correct option. Pick the option you would confidently deploy in a production generative AI application.

Common Mistakes Candidates Make

Many learners approach AIP-C01 like a traditional theory exam, but it is designed to test how you reason about AI systems, data, security, and operations at scale.

1. Ignoring data quality

Candidates focus too much on models and forget that poor data directly leads to unreliable AI outputs in real systems.

2. Overcomplicating prompts

Many learners write long, complex prompts instead of clear, structured ones that work consistently in production.

3. Skipping monitoring

Candidates design AI systems but neglect CloudWatch logging, cost tracking, and performance observability over time.

4. Poor security design

Weak IAM roles and access controls expose sensitive data and show a limited understanding of enterprise AI risk.

5. No hands-on practice

Relying only on theory without real Bedrock, embeddings, or RAG experience leads to weak exam judgment.

If your preparation focuses only on concepts, ignoring the real-world use cases, then you may struggle.

Industry Shift This Exam Represents

AI has significantly changed how systems are built on AWS, shifting from isolated machine learning models to intelligent, business-ready platforms. The AIP-C01 exam reflects this by treating AI as an operational system rather than a one-time experiment.

This shift highlights:

- ML models → end-to-end AI systems

- Static applications → intelligent, adaptive applications

- Manual operations → automated, AI-driven workflows

This makes AIP-C01 a critical foundational certification for professionals preparing for cloud, AI, and platform roles in 2026.

AWS Generative AI Certification Path

A recommended AWS Generative AI certification path looks like this:

- AWS Cloud Practitioner – Cloud basics

- AWS Certified AI Practitioner (AIF-C01) – AI fundamentals

- AWS Machine Learning Specialty – Advanced ML

- AWS Certified Generative AI Developer – Professional (AIP-C01) – Expert level

Each step builds deeper expertise in cloud-based AI development.

AIP-C01 FAQ

- Should I take AIP-C01?

Yes, if you want to use AWS AI services such as Amazon Bedrock, embeddings, and RAG-based systems for real business workloads. AIP-C01 is a strong choice: it lays the groundwork before you move on to more advanced Generative AI roles.

- How tough is the AIP-C01 exam?

The exam involves little coding, but it is conceptually demanding. It tests how well you can apply real-world Generative AI systems on AWS rather than how many service features you can remember.

- What is the duration of the exam?

You have 120 minutes to complete the AIP-C01 exam, which gives you enough time to think through the scenario-based questions.

- What is the ideal preparation time?

Most candidates prepare for 4 to 6 weeks of disciplined study that includes hands-on work with AWS services like Bedrock, Lambda, and vector storage.

- Do I need to do hands-on labs?

Labs are not mandatory, but hands-on experience with real AWS AI workflows makes a big difference in how confident you feel on exam day and how ready you are for a real job.

- Who Should Take AIP-C01?

Cloud Engineers, AI Developers, Data Engineers, DevOps Engineers, and Platform Engineers who use AWS services like Bedrock, embeddings, and serverless architectures to build, integrate, automate, or manage AI-driven applications and data systems.

- Should complete beginners with no cloud experience take AIP-C01?

No. If you have never used AWS or cloud computing before, it is best to start with the AWS Certified Cloud Practitioner.

- Is AIP-C01 right for purely theoretical learners?

No. This exam is based on real-world production scenarios, so learners who study only theory without hands-on practice may struggle.

- Is AIP-C01 Worth It in 2026?

Yes, it’s completely worth the effort and time as it boosts credibility, improves employability, signals real AI skills, and aligns with enterprise needs.

Crack the AIP-C01 exam smartly!

AWS is at the forefront of generative AI, which is changing how cloud development works. Earning the AIP-C01 certification, backed by solid preparation with the AWS Certified Generative AI Developer – Professional practice exam, shows that you can build real AI systems, not just talk about them.

Prepare with Whizlabs practice tests, hands-on labs, and real AWS projects, and build the confidence to gain career momentum. So, why wait? Ace the exam and build AI systems the way organizations expect!